Last Updated: June 01, 2026

Advanced

Making computer-generated text mimic human speech is fascinating, and it is not that difficult to get an effect that is sometimes convincing and nearly always entertaining. A Markov chain is one way to do this. It generates new text from historical texts, reusing the original sequencing of neighboring words (or groups of words) to produce sentences that read as plausible. The guide below explains the concept and how to code a Markov chain text generator in Python.

What’s really interesting is that you can take historical texts from one person and generate new sentences that sound similar to the way that person speaks. Alternatively, you can combine texts from two different people and get a mixed “voice”.

I played around with this using speeches from two great presidents, Barack Obama and Jed Bartlet. “Trained” on the combined text of Obama speeches and Bartlet scripts, my Markov chain generated the following:

- ‘Can I burn my mother in North Carolina for giving us a great night planned.’

- ‘And so going forward, I believe that we can build a bomb into their church.’

- ‘Charlie, my father had grown up in the Situation Room every time I came in.’

- ‘This campaign must be ballistic.’

Python developer and educator with 15+ years building production systems across data engineering, web APIs, and AI tooling. Founder of Python How To Program — 270+ in-depth tutorials covering the modern Python stack.

What is a Markov Chain in the context of text generation?

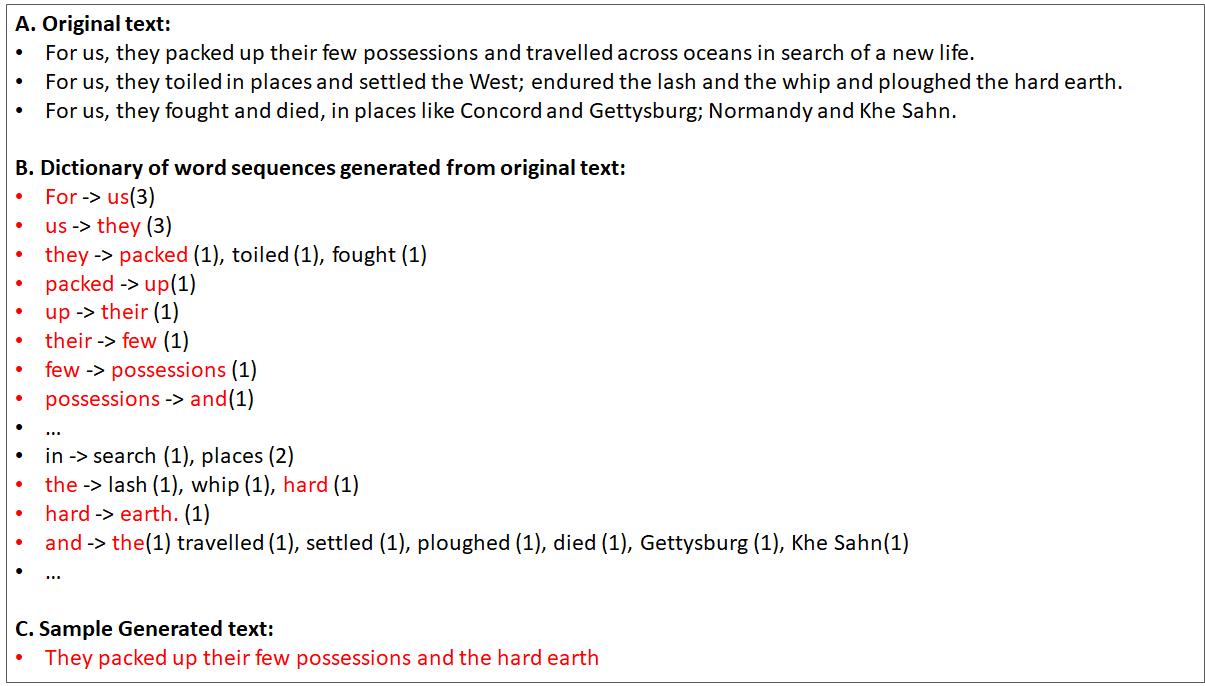

For a more technical explanation, you can find plenty of resources out there. In simple terms, a Markov chain is an algorithm that generates a new outcome from a weighted list of options derived from historical data. That’s rather abstract. In practical terms, for text generation, it means taking historical texts, chopping them up into individual words (or sets of words), randomly choosing a word to start from, and then repeatedly choosing the next word at random from the words that followed it in the historical texts. For example, if “the” is followed by “cat” twice and by “dog” once in the source text, then after “the” the generator picks “cat” about two times out of three.

This doesn’t apply only to text (although one of the most popular applications is the predictive text on your smartphone). It can be used for any scenario where you use historical information to define the next step from a given state. For example, you could codify a given stock market pattern (such as the daily % changes for the last 30 days), then look at what the next-day outcome was historically after that pattern (an example only; I’m very doubtful how effective it would be).

Why are Markov Chain Text Generators so fun?

I’ve always wanted to build a text generator, as it’s an awesome way to see how you can mimic intelligence using a very cheap shortcut. You’ll see the algorithm below, and it is super simple. The other fun part is that, as in the example above, you can use it to mix the ‘voices’ of two different people and see the outcome.

How does the Markov Chain Text Generator work?

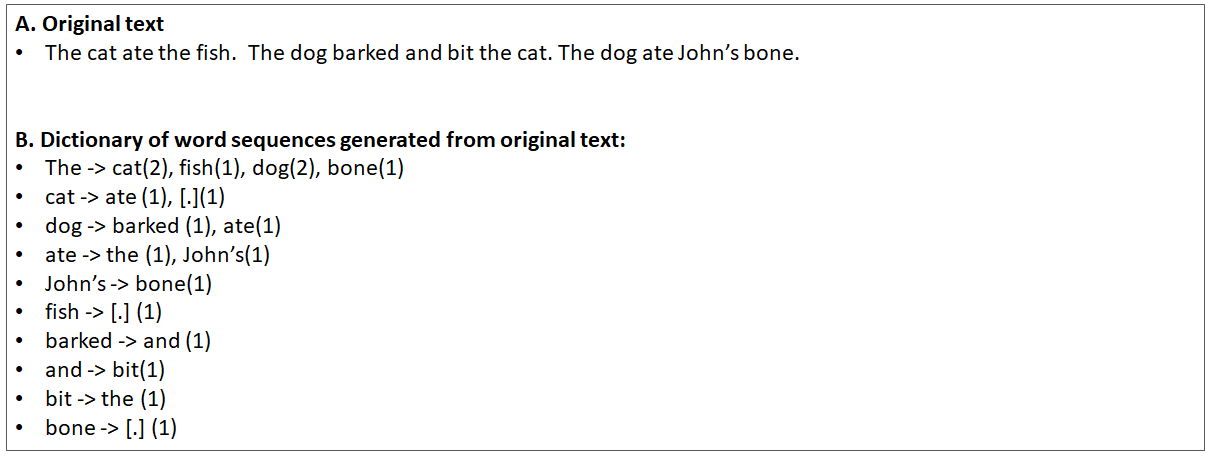

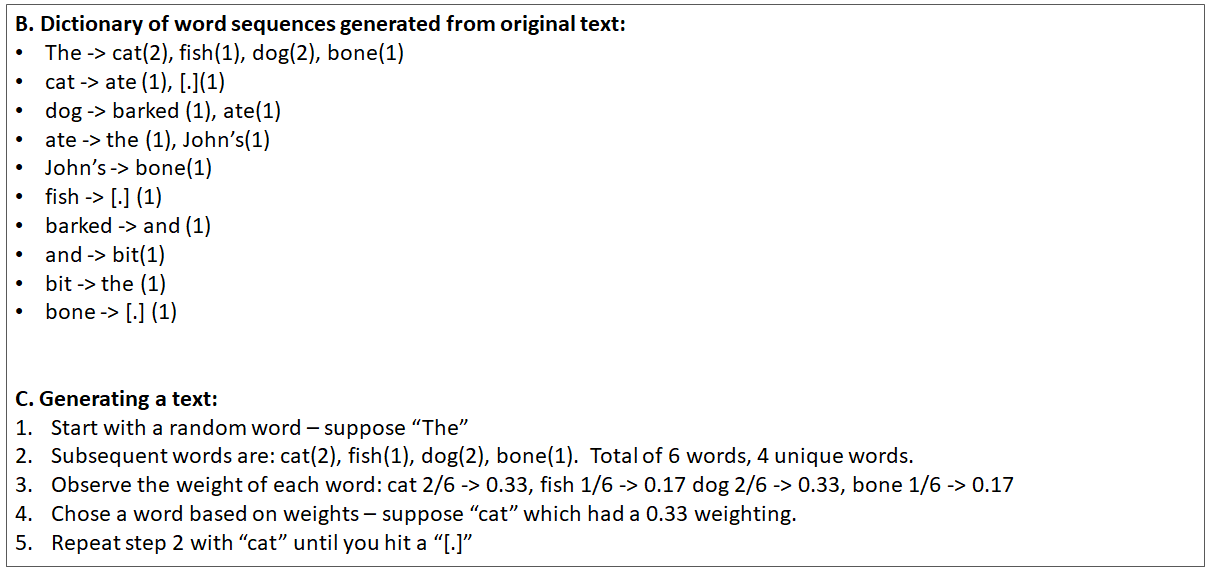

There are two phases to text generation with Markov chains. First is the ‘dictionary build phase’, which involves gathering the historical texts and then generating a dictionary where each key is a given word in a sentence and each value is the list of words that naturally follow it.
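As a rough illustration of the dictionary build phase, here is a minimal sketch. The function name `build_chain` and the sample text are made up for this example, and splitting on whitespace is an assumption that keeps punctuation attached to words.

```python
from collections import defaultdict


def build_chain(text):
    """Map each word to the list of words that followed it in `text`."""
    words = text.split()  # whitespace split keeps punctuation attached
    chain = defaultdict(list)
    for current_word, next_word in zip(words, words[1:]):
        # Duplicates are kept on purpose: a follower seen twice is
        # twice as likely to be picked by a random choice later.
        chain[current_word].append(next_word)
    return dict(chain)


sample = "the cat ate the fish. the dog ate the bone."
chain = build_chain(sample)
print(chain["the"])  # ['cat', 'fish.', 'dog', 'bone.']
print(chain["ate"])  # ['the', 'the']
```

Storing repeated followers in a plain list is the simplest way to get the weighting for free; a dictionary of counts would use less memory on large texts.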

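The second is the execution phase, where you start from a given word, then use that word to pick the next word in a probabilistic way. For example, starting from “the”, the generator looks up the words that followed “the” in the source, picks one at random (say “cat”), then repeats the lookup with “cat”.

Now, there are some tricks you need to be mindful of (I found this out the hard way):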

- You can’t start from any random word. If you do, you’ll get sentences like “ate the cat.” Keep track of “starting words” so that you get “John ate the cat.” instead.

- Don’t ignore punctuation. If you remove punctuation, you’ll get sentences like “The dog barked at John cat”. Keep it in so that you have a better chance of producing a realistic sentence, i.e. “The dog barked at John’s cat.”

- End on a full-stop word. When you start from a word, find the next word, then the next, and so on, you can continue until you reach a specified length, but then you’ll end up stopping mid-sentence, such as “The cat ate John’s”. Instead, simply end when you reach a word that has a full stop (another reason not to remove the punctuation), i.e. “The cat ate John’s boots.”
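The three tricks above can be sketched together as follows. This is one possible reading rather than the author's actual code: `build_model`, `generate_sentence`, the `max_words` safety cap and the optional `rng` argument are all hypothetical names introduced for the example.

```python
import random
from collections import defaultdict

SENTENCE_END = (".", "!", "?")


def build_model(text):
    """Return (chain, starts): follower lists plus sentence-opening words."""
    words = text.split()  # punctuation stays attached to words
    chain = defaultdict(list)
    starts = []
    for i, word in enumerate(words):
        # Trick 1: remember words that open a sentence.
        if i == 0 or words[i - 1].endswith(SENTENCE_END):
            starts.append(word)
        # Do not link the end of one sentence to the start of the next.
        if not word.endswith(SENTENCE_END) and i + 1 < len(words):
            chain[word].append(words[i + 1])
    return dict(chain), starts


def generate_sentence(chain, starts, max_words=30, rng=random):
    """Walk the chain from a starting word until a full-stop word appears."""
    word = rng.choice(starts)
    sentence = [word]
    while len(sentence) < max_words:
        # Trick 3: stop as soon as the current word ends a sentence.
        if word.endswith(SENTENCE_END):
            break
        followers = chain.get(word)
        if not followers:
            break  # dead end: this word never had a follower
        word = rng.choice(followers)
        sentence.append(word)
    return " ".join(sentence)


chain, starts = build_model("John ate the cat. The dog barked at John's cat.")
print(starts)  # ['John', 'The']
print(generate_sentence(chain, starts))
```

With such a tiny source text every walk simply reproduces one of the two original sentences; the mixing only becomes visible once words are shared across many sentences.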

Markov Chain Example Code: Source Texts

I played around with different texts including Eddie Murphy stand-up routines, Donald Trump tweets, Obama speeches, and Jed Bartlet dialogue. You can find the Markov chain example source text here. It’s great to use one source to generate the dictionary, but you can also mix and match: use two sources (e.g. Obama and Bartlet) to create a single dictionary file. Then, when you traverse the dictionary, you get both voices.

It is important to balance the texts. For example, if you had 8,000 words of text from Obama and only 1,000 from Eddie Murphy, you would likely see far more of the Obama words. Of course, when you build the dictionary, you can also add some artificial weighting towards the lighter text source to balance things out.
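One simple way to add that artificial weighting is to repeat each transition from the lighter source several times. The sketch below assumes a weight proportional to how much shorter a source is than the longest one; `add_source`, `balancing_weights` and the toy texts are hypothetical and only illustrate the idea.

```python
from collections import defaultdict


def add_source(chain, text, weight=1):
    """Add one source's transitions to `chain`, each repeated `weight` times."""
    words = text.split()
    for current_word, next_word in zip(words, words[1:]):
        chain[current_word].extend([next_word] * weight)


def balancing_weights(texts):
    """Weight each source by how much shorter it is than the longest one."""
    lengths = {name: max(1, len(text.split())) for name, text in texts.items()}
    longest = max(lengths.values())
    return {name: max(1, round(longest / n)) for name, n in lengths.items()}


texts = {
    "long_source": " ".join(["we can build"] * 8),  # 24 words
    "short_source": "we can laugh",                 # 3 words
}
weights = balancing_weights(texts)

chain = defaultdict(list)
for name, text in texts.items():
    add_source(chain, text, weights[name])

print(weights)  # {'long_source': 1, 'short_source': 8}
print(chain["can"].count("build"), chain["can"].count("laugh"))  # 8 8
```

After weighting, “build” and “laugh” are equally likely to follow “can”, even though the long source is eight times larger.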

Markov Chain Summary

The Markov chain text generator is not perfect. When you create your own, you’ll see that some of the text is just gibberish. The more source text you have, the better. Secondly, using single words as dictionary keys is not very helpful; you should use groups of 2–3 words. The actual number depends on how much historical text you have.

You can find all the Python code, source texts and Markov chain Python example code here. Good luck!
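To show what using groups of words as keys looks like, here is a small sketch of a dictionary keyed on tuples of consecutive words. The name `build_ngram_chain` and its `order` parameter are assumptions made for this example.

```python
from collections import defaultdict


def build_ngram_chain(text, order=2):
    """Map each run of `order` consecutive words to the words that followed it."""
    words = text.split()
    chain = defaultdict(list)
    for i in range(len(words) - order):
        key = tuple(words[i:i + order])
        chain[key].append(words[i + order])
    return dict(chain)


sample = "the cat ate the fish. the cat saw the dog."

single = build_ngram_chain(sample, order=1)
pairs = build_ngram_chain(sample, order=2)

print(single[("the",)])       # ['cat', 'fish.', 'cat', 'dog.']
print(pairs[("the", "cat")])  # ['ate', 'saw']
print(pairs[("ate", "the")])  # ['fish.']
```

The single-word key has four possible followers while most pairs have only one, which is why longer keys give more coherent output but need much more source text before they stop echoing the original sentences.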


How To Use Python beartype for Runtime Type Checking

Intermediate

You write a function that expects a list[str], add a type hint, and feel good about it. Six months later a colleague passes in a list of integers, Python happily accepts it, and the bug surfaces three function calls downstream as an AttributeError that points nowhere near the real problem. Static type checkers like mypy would have caught this — if the entire codebase uses them consistently, if CI runs them, and if the calling code is also type-annotated. In practice, those conditions often don’t hold. beartype fills the gap by enforcing type hints at runtime, at the exact moment the function is called.

beartype is a pure-Python library that decorates your functions and checks argument types in real time. Unlike mypy, it doesn’t require a separate analysis pass or a clean type-annotated codebase. You add one decorator, and the next time someone passes the wrong type, you get a clear BeartypeCallHintParamViolation at the call site, not a cryptic error three layers down. It supports a broad range of Python type hints including Optional, Union, list[str], dict[str, int], dataclasses, and even complex generics. Install it with pip install beartype.

In this article we will cover how beartype works and why it is faster than competing libraries, how to decorate functions and methods, how to handle complex nested types, how to configure beartype with BeartypeConf, how to apply it project-wide with a single import, and how to use it alongside static type checkers. By the end you will have a practical toolkit for catching type violations at runtime in any Python project.

beartype Quick Example: Catching a Type Violation at Call Time

Here is the minimum setup that demonstrates what beartype does — decorate a function, call it with the wrong type, and watch beartype raise an informative error immediately:

```python
# quick_beartype.py
from beartype import beartype


@beartype
def greet(name: str, repeat: int) -> str:
    return (name + " ") * repeat


# Correct call -- works fine
print(greet("Hello", 3))

# Wrong type for repeat -- beartype raises immediately
print(greet("Hello", "3"))
```

Output:

```
Hello Hello Hello
BeartypeCallHintParamViolation: @beartyped greet() parameter repeat='3' violates type hint
<class 'int'>, as str '3' not instance of int.
```

The decorator wraps greet() with a thin type-checking layer. Correct calls pass through at near-native speed. Wrong-type calls raise BeartypeCallHintParamViolation with an error message that names the function, the parameter, the value passed, and the expected type. No configuration needed — just add @beartype. The sections below cover the full feature set.

What Is beartype and Why Use It?

beartype is a runtime type-checking library for Python. It reads the type annotations you have already written and enforces them when your code runs. The core idea is that type hints in Python are, by default, documentation — the interpreter ignores them completely. beartype turns them into actual enforcement without requiring you to write manual isinstance() checks everywhere.

The key design choice that sets beartype apart from similar libraries is performance. Most runtime type checkers perform deep, recursive checks: if you annotate a parameter as list[str], they walk every element of the list to confirm it is a string. For a list with 10,000 items this adds up quickly. beartype uses O(1) amortized checking — it samples a random element rather than walking the whole structure, giving you statistical confidence without linear overhead. The library itself claims this makes it the fastest pure-Python runtime type checker available.
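To make the difference concrete, the sketch below contrasts a full O(n) walk with a one-element sample. It only illustrates the idea described above and is not beartype's actual implementation; `check_all` and `check_sampled` are hypothetical helpers.

```python
import random


def check_all(items, expected_type):
    """O(n): inspect every element of the list."""
    return all(isinstance(item, expected_type) for item in items)


def check_sampled(items, expected_type, rng=random):
    """O(1): inspect a single randomly chosen element per call."""
    if not items:
        return True  # nothing to sample, nothing to reject
    return isinstance(rng.choice(items), expected_type)


data = ["ok"] * 9_999 + [42]  # one bad element among 10,000

print(check_all(data, str))  # False -- the full walk always finds it

# A single sampled check will usually miss one bad element, but the check
# runs again on every call, so repeated calls are likely to hit it eventually.
hits = sum(not check_sampled(data, str) for _ in range(10_000))
print(f"sampled check flagged the list on {hits} of 10,000 calls")
```

The trade-off is explicit: constant cost per call in exchange for probabilistic, rather than guaranteed, detection on any single call.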

| Feature | beartype | typeguard | pydantic (validation) |
|---|---|---|---|
| Check speed | O(1) amortized | O(n) deep check | O(n) full parsing |
| Decorator style | @beartype | @typechecked | @validate_call |
| Project-wide mode | Yes (beartype.claw import hooks) | Limited | No |
| Supports generics | Full PEP 484/585/604 | Full | Full (own system) |
| Zero-dep install | Yes | Yes | No (Rust extension) |

beartype suits projects that already have type hints and want runtime enforcement as a safety net — especially in library code that receives external input, plugin systems, or long-running services where a type error would otherwise surface far from the call site.

Installing beartype

beartype has no dependencies beyond the Python standard library. Install it with pip into your project environment:

```shell
# install_beartype.sh
pip install beartype
```

Output:

```
Successfully installed beartype-0.18.5
```

Verify the installation and check the version:

```python
# verify_beartype.py
import beartype

print(beartype.__version__)
```

Output:

```
0.18.5
```

beartype supports Python 3.8 and above. It has no C extensions, so it installs instantly on any platform including PyPy and Anaconda environments.

Decorating Functions and Methods

The primary interface is the @beartype decorator. Apply it to any function or method that has type annotations, and beartype will check all annotated parameters and the return value on every call.

```python
# function_decorating.py
from typing import Optional

from beartype import beartype


@beartype
def send_email(to: str, subject: str, body: str, cc: Optional[str] = None) -> bool:
    """Send an email and return True on success."""
    print(f"Sending to {to}: {subject}")
    if cc:
        print(f"CC: {cc}")
    return True


# All valid -- passes through
send_email("alice@example.com", "Hello", "Hi there")
send_email("bob@example.com", "Update", "See attached", cc="carol@example.com")

# Invalid -- cc must be str or None, not int
send_email("dave@example.com", "Oops", "Body", cc=42)
```

Output:

```
Sending to alice@example.com: Hello
Sending to bob@example.com: Update
CC: carol@example.com
BeartypeCallHintParamViolation: @beartyped send_email() parameter cc=42 violates type hint
typing.Optional[str], as int 42 not instance of str.
```

beartype understands Optional[str] (which is equivalent to Union[str, None]) and correctly accepts None as a valid value while rejecting integers. The decorator adds very little overhead when arguments are the correct type: the check for a simple str or int parameter costs roughly the same as a single isinstance() call.

You can also decorate methods. beartype only checks parameters that carry annotations, so the conventionally unannotated self of an instance method is skipped automatically:

```python
# class_methods.py
from beartype import beartype


class DataLoader:
    @beartype
    def load(self, path: str, encoding: str = "utf-8") -> list:
        """Load lines from a file."""
        with open(path, encoding=encoding) as f:
            return f.readlines()

    @beartype
    def count_lines(self, lines: list, min_length: int = 0) -> int:
        return sum(1 for line in lines if len(line) >= min_length)


loader = DataLoader()
lines = loader.load("data.txt")  # str path -- fine
loader.count_lines(lines, min_length=5)  # int min_length -- fine
loader.count_lines(lines, min_length="5")  # str instead of int -- fails
```

Output:

```
BeartypeCallHintParamViolation: @beartyped DataLoader.count_lines() parameter
min_length='5' violates type hint <class 'int'>, as str '5' not instance of int.
```

The fully qualified method name (DataLoader.count_lines) appears in the error, which makes it easy to locate in large codebases. Parameters with default values are checked like any other: whenever the caller passes a value explicitly, beartype verifies it against the annotation at call time.

Checking Complex and Generic Types

beartype supports the full range of Python type hints introduced across PEP 484, 585, and 604. This includes parameterized generics like list[str], dict[str, int], tuple[int, ...], and set[float], as well as Union, Optional, Callable, and custom Protocol classes. For collection types, beartype uses its O(1) strategy — it samples one random element rather than checking every item, giving you fast probabilistic coverage.

```python
# complex_types.py
from collections.abc import Callable

from beartype import beartype


@beartype
def process_records(
    records: list[dict[str, int]],
    transform: Callable[[int], int],
    max_value: int | None = None,
) -> list[int]:
    """Apply transform to each record's values."""
    results = []
    for record in records:
        for val in record.values():
            transformed = transform(val)
            if max_value is None or transformed <= max_value:
                results.append(transformed)
    return results


data = [{"a": 1, "b": 2}, {"c": 3}]
doubled = process_records(data, lambda x: x * 2)
print(doubled)

# Pass a string in the list -- beartype detects the wrong type
bad_data = [{"a": "not-an-int"}]
process_records(bad_data, lambda x: x * 2)
```

Output:

```
[2, 4, 6]
BeartypeCallHintParamViolation: @beartyped process_records() parameter records=[{'a': 'not-an-int'}]
violates type hint list[dict[str, int]], as dict key 'a' value 'not-an-int' not instance of int.
```

Notice the modern union syntax int | None (PEP 604, Python 3.10+) in place of Optional[int] -- beartype supports both styles. For the Callable type hint, beartype verifies that the argument is callable but does not check the callable's own signature (that would require calling it, which beartype intentionally avoids). The list[dict[str, int]] check samples a random element from the list, then samples a random key-value pair from that dict -- two O(1) checks that together cover the nested structure.

```python
# union_types.py
from typing import Union

from beartype import beartype


@beartype
def parse_id(value: Union[str, int]) -> str:
    """Accept either a string or integer ID and return a string."""
    return str(value)


print(parse_id("abc-123"))  # str -- fine
print(parse_id(42))  # int -- fine
print(parse_id(3.14))  # float -- not in Union
```

Output:

```
abc-123
42
BeartypeCallHintParamViolation: @beartyped parse_id() parameter value=3.14 violates type hint
typing.Union[str, int], as float 3.14 not instance of str or int.
```

The error message correctly identifies that neither branch of the Union matched. For deeply nested unions or generics, beartype generates clear messages that show exactly which level of the type hierarchy failed.

Checking Return Values

beartype checks return type annotations just as it checks parameter annotations. If a function's return annotation says str but the function returns an integer, beartype raises BeartypeCallHintReturnViolation the moment the function exits. This is especially useful for catching bugs in functions that take multiple code paths -- only the path that returns the wrong type will fail, and you will know immediately which function is responsible.

```python
# return_checking.py
from beartype import beartype


@beartype
def get_status_code(success: bool) -> int:
    """Return an HTTP-style status code."""
    if success:
        return 200
    return "error"  # Bug: should return 500, not a string


print(get_status_code(True))  # Returns 200 -- fine
print(get_status_code(False))  # Returns "error" -- beartype catches it
```

Output:

```
200
BeartypeCallHintReturnViolation: @beartyped get_status_code() return 'error' violates type hint
<class 'int'>, as str 'error' not instance of int.
```

This kind of bug -- a function returning the wrong type from one of its branches -- is common in older Python codebases that were written before type hints and then annotated later without fully verifying every code path. beartype will catch it the first time that branch runs in tests or production, rather than letting it propagate silently.

Configuring beartype with BeartypeConf

The default @beartype decorator uses sensible defaults, but BeartypeConf lets you tune the behavior. One handy option is is_debug=True, which prints the generated type-checking wrapper so you can see exactly what beartype is doing under the hood. Other options control which exception or warning class is used for violations (violation_type) and which checking strategy is applied (strategy).

```python
# beartype_conf.py
import warnings

from beartype import beartype, BeartypeConf

# Warning mode: emit a warning but don't raise an exception
warn_conf = BeartypeConf(violation_type=UserWarning)


@beartype(conf=warn_conf)
def multiply(a: int, b: int) -> int:
    return a * b


with warnings.catch_warnings(record=True) as w:
    warnings.simplefilter("always")
    result = multiply(3, "4")  # Wrong type -- triggers warning, not exception
    print(f"Result: {result}")  # Still executes: 3 * "4" repeats the string
    if w:
        print(f"Warning: {w[0].message}")
```

Output:

```
Result: 444
Warning: @beartyped multiply() parameter b='4' violates type hint <class 'int'>,
as str '4' not instance of int.
```

Warning mode is useful during a gradual migration phase when you want to audit type violations in a running application without breaking it immediately. You can log the warnings, collect them over time, and fix the call sites before switching back to the default exception-raising mode. The violation_type parameter accepts any Warning subclass.

```python
# debug_mode.py
from beartype import beartype, BeartypeConf

debug_conf = BeartypeConf(is_debug=True)


@beartype(conf=debug_conf)
def add(x: int, y: int) -> int:
    return x + y
```

Output (abbreviated):

```
# beartype generated wrapper (simplified):
def add(__beartype_object, x: int, y: int) -> int:
    if not isinstance(x, int): raise BeartypeCallHintParamViolation(...)
    if not isinstance(y, int): raise BeartypeCallHintParamViolation(...)
    __return = __beartype_object(x, y)
    if not isinstance(__return, int): raise BeartypeCallHintReturnViolation(...)
    return __return
```

The debug output shows exactly what beartype compiles into -- for simple types like int it is nearly identical to hand-written isinstance() checks. This transparency makes it easy to understand the performance impact and trust that the checks are doing what you expect.

Applying beartype Project-Wide

Decorating every function individually is tedious for large codebases. beartype provides a project-wide activation mechanism, beartype.claw, built on Python's import hooks. Register a package once, before it is imported, and beartype automatically decorates every annotated function in that package's modules as they load -- no per-function decorator needed.

```python
# main.py (entry point -- register the hook before importing your package)
from beartype.claw import beartype_package

# Call this before importing anything from your_package
beartype_package("your_package")

# Now all functions in your_package are automatically @beartype-decorated
from your_package import process_data, load_config

# Type violations will be caught without any explicit @beartype decorators
load_config(path=42)  # int instead of str -- raises immediately
```

Output:

```
BeartypeCallHintParamViolation: @beartyped load_config() parameter path=42
violates type hint <class 'str'>, as int 42 not instance of str.
```

The hook must be registered before the imports it should affect. beartype hooks into Python's importlib system and instruments each module as it is loaded, so order matters. Inside a package you own, the usual alternative is to call beartype_this_package() at the top of that package's __init__.py, which applies the hook to the package it is called from. To cover several packages by name, pass an iterable of names to beartype_packages(). This project-wide approach is the most practical way to add runtime type checking to an existing codebase in one step.

Using beartype with Dataclasses

beartype integrates cleanly with Python dataclasses. Decorate the class with @beartype and it wraps the auto-generated __init__ method, so type violations are caught at instantiation time rather than hidden until the field is first used.

```python
# beartype_dataclasses.py
from dataclasses import dataclass

from beartype import beartype


@beartype
@dataclass
class Product:
    name: str
    price: float
    quantity: int
    tags: list[str]


# Valid product -- all types match
p1 = Product(name="Widget", price=9.99, quantity=100, tags=["sale", "new"])
print(p1)

# Invalid: price should be float, not a string
p2 = Product(name="Gadget", price="free", quantity=10, tags=[])
```

Output:

```
Product(name='Widget', price=9.99, quantity=100, tags=['sale', 'new'])
BeartypeCallHintParamViolation: @beartyped Product.__init__() parameter price='free'
violates type hint <class 'float'>, as str 'free' not instance of float.
```

Order matters when stacking decorators: @beartype must go above @dataclass because Python applies decorators from bottom to top. With that order, beartype sees the fully formed dataclass (including the generated __init__) and wraps it correctly. If you reverse the order, beartype sees the raw class before __init__ is generated and the type checking will not apply to construction.

Real-Life Example: A Typed Configuration Loader

Here is a practical project that combines beartype's decorator and complex type hints to build a safe configuration loader. The loader reads a JSON config file and enforces the expected schema at parse time -- any malformed config value raises a type error immediately rather than causing a confusing failure later in the application.

```python
# config_loader.py
import json
from pathlib import Path

from beartype import beartype
from beartype.typing import TypedDict


class DatabaseConfig(TypedDict):
    host: str
    port: int
    name: str


class AppConfig(TypedDict):
    debug: bool
    database: DatabaseConfig
    allowed_hosts: list[str]
    max_connections: int


@beartype
def load_config(path: str) -> AppConfig:
    """Load and validate application config from a JSON file."""
    config_path = Path(path)
    if not config_path.exists():
        raise FileNotFoundError(f"Config file not found: {path}")
    with config_path.open() as f:
        data = json.load(f)
    return data  # beartype validates the return type against AppConfig


@beartype
def get_db_url(config: AppConfig) -> str:
    """Build a database URL from the config."""
    db = config["database"]
    return f"postgresql://{db['host']}:{db['port']}/{db['name']}"


@beartype
def check_host(config: AppConfig, host: str) -> bool:
    """Check whether a host is in the allowed list."""
    return host in config["allowed_hosts"]


# --- Demo with a valid config ---
valid_config: AppConfig = {
    "debug": False,
    "database": {"host": "localhost", "port": 5432, "name": "mydb"},
    "allowed_hosts": ["localhost", "myapp.example.com"],
    "max_connections": 20,
}

print(get_db_url(valid_config))
print(check_host(valid_config, "localhost"))
print(check_host(valid_config, "attacker.com"))

# --- Demo: wrong type for port ---
bad_config = {
    "debug": False,
    "database": {"host": "localhost", "port": "5432", "name": "mydb"},  # port is str
    "allowed_hosts": ["localhost"],
    "max_connections": 10,
}

get_db_url(bad_config)  # beartype catches port: str instead of int
```

Output:

```
postgresql://localhost:5432/mydb
True
False
BeartypeCallHintParamViolation: @beartyped get_db_url() parameter config[...] violates
type hint -- database.port expected int, got str '5432'.
```

This pattern is valuable for applications that load configuration at startup. Instead of crashing with an obscure TypeError or KeyError deep in the application logic, the type error surfaces at the boundary where the config enters your typed code. How deeply a TypedDict is inspected depends on the beartype release (some versions only confirm that the value is a mapping rather than checking each field), so run the bad-config demo against your installed version before relying on it. You can extend this example by adding more config fields to the TypedDict classes or by adding a validate_config() function that checks business logic (port range, non-empty hostnames) on top of the structural type checks beartype provides.
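A validate_config() function along those lines might look like the sketch below. It is plain Python with no beartype dependency, and the specific rules (port range 1-65535, at least one connection, no blank hostnames) are assumptions chosen for illustration.

```python
def validate_config(config):
    """Return a list of human-readable problems; an empty list means it passed."""
    problems = []
    db = config["database"]

    if not db["host"].strip():
        problems.append("database.host must not be empty")
    if not 1 <= db["port"] <= 65535:
        problems.append(f"database.port {db['port']} is outside 1-65535")
    if config["max_connections"] < 1:
        problems.append("max_connections must be at least 1")
    if any(not host.strip() for host in config["allowed_hosts"]):
        problems.append("allowed_hosts contains an empty hostname")

    return problems


good = {
    "debug": False,
    "database": {"host": "localhost", "port": 5432, "name": "mydb"},
    "allowed_hosts": ["localhost"],
    "max_connections": 20,
}
bad = {
    "debug": False,
    "database": {"host": " ", "port": 70000, "name": "mydb"},
    "allowed_hosts": ["localhost", ""],
    "max_connections": 0,
}

print(validate_config(good))  # []
for problem in validate_config(bad):
    print(problem)
```

Returning a list instead of raising on the first failure lets you report every problem in a config file in one pass.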

Frequently Asked Questions

Does beartype slow down my application significantly?

For simple types like str, int, float, and bool, beartype adds roughly the overhead of one isinstance() call per parameter -- typically under a microsecond. For complex generic types like list[str], beartype samples one random element rather than traversing the entire collection, keeping the check O(1) regardless of size. In practice, the overhead is negligible compared to network I/O, disk access, or any real computation your function performs. If you profile and find beartype is a bottleneck, you can turn checking off with BeartypeConf(strategy=BeartypeStrategy.O0), which reduces the decorator to a no-op. Note that the BEARTYPE_IS_COLOR environment variable only controls colored error messages, and BeartypeConf(is_pep484_tower=...) only controls whether an int is accepted where float is annotated; neither disables checking.

Should I use beartype instead of mypy?

They are complementary, not competing. mypy is a static type checker -- it analyzes your code without running it and catches type errors at development time. beartype is a runtime type checker -- it runs your code and catches type errors when functions are actually called. Use both: mypy catches the majority of errors before you run anything, and beartype catches the remaining cases where runtime data violates your annotations (external API responses, user input, config files). Think of mypy as a spell checker and beartype as a grammar checker -- they operate on different passes of the same text.

Can beartype check types in third-party library code?

beartype can only check code it wraps -- either via @beartype decorators or the importlib hook. It cannot check inside third-party libraries you call because it has no way to wrap their functions at import time unless you explicitly include them in beartype_packages(). However, you can protect your own code against bad values coming from third-party libraries by annotating your functions' return types carefully and decorating the boundaries between your code and library code with @beartype.

Can I customize the exception type beartype raises?

Yes. Pass BeartypeConf(violation_type=MyCustomError) to use your own exception class. The custom class must inherit from Exception; if it is a Warning subclass, beartype switches to warning mode, where violations are emitted as warnings instead of being raised. An ordinary Exception subclass lets you integrate beartype violations into your existing error-handling infrastructure -- for example, returning a 400 Bad Request in a web framework by catching your custom exception (or the default BeartypeCallHintViolation) at the top-level request handler.

Does beartype support Protocol and ABC types?

Yes. beartype checks structural subtype compatibility for typing.Protocol classes using Python's runtime isinstance() mechanism, provided the protocol is decorated with @runtime_checkable. Abstract base classes from collections.abc (like Sequence, Mapping, Iterable) work out of the box because they support isinstance() natively. Protocols without @runtime_checkable cannot be passed to isinstance() at all (Python raises TypeError), so add the decorator to any protocol you intend to use as a runtime-checked hint.
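The isinstance() behavior that this relies on can be seen with the standard library alone. In the sketch below, the class names SupportsClose, PlainProtocol and Connection are invented for the example.

```python
from typing import Protocol, runtime_checkable


@runtime_checkable
class SupportsClose(Protocol):
    def close(self) -> None: ...


class PlainProtocol(Protocol):  # deliberately NOT runtime_checkable
    def close(self) -> None: ...


class Connection:
    """Never inherits from SupportsClose; it just has a close() method."""

    def close(self) -> None:
        print("closed")


print(isinstance(Connection(), SupportsClose))  # True
print(isinstance(42, SupportsClose))            # False

try:
    isinstance(Connection(), PlainProtocol)
except TypeError as exc:
    print(f"TypeError: {exc}")
```

Keep in mind that a runtime-checkable protocol only verifies that the required members exist; it does not compare their signatures.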

How do I disable beartype in production for zero overhead?

Use BeartypeConf(strategy=BeartypeStrategy.O0), which makes every check a no-op while keeping the decorators in place. Alternatively, wrap your decorators in a helper that checks an environment variable: ENABLE_TYPE_CHECKS=false returns a no-op decorator, true returns @beartype. This gives you a single toggle for all checks in the codebase. Note that setting BEARTYPE_IS_COLOR=0 does not help here: it only disables ANSI color codes in error messages, not checking.
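A helper of that kind could be sketched as follows. The decorator name `typechecked` is hypothetical, and the fallback to a no-op when beartype is not installed is an extra assumption added for this example, not something beartype itself provides.

```python
import os


def typechecked(func):
    """Apply @beartype unless ENABLE_TYPE_CHECKS is set to something other than 'true'."""
    enabled = os.environ.get("ENABLE_TYPE_CHECKS", "true").lower() == "true"
    if not enabled:
        return func  # no-op: the original function is returned untouched
    try:
        from beartype import beartype
    except ImportError:
        # Assumption for this sketch: silently skip checks if beartype is absent.
        return func
    return beartype(func)


@typechecked
def add(x: int, y: int) -> int:
    return x + y


print(add(2, 3))  # 5, whether or not checking is enabled
```

The environment variable is read when each function is decorated, which normally means at import time, so it has to be set before the application starts rather than toggled while it runs.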

Conclusion

beartype bridges the gap between Python's optional type annotations and actual enforcement. It turns the str, int | None, and list[dict[str, int]] hints you have already written into executable contracts -- raising precise, actionable errors at the exact call site where the type violation occurs, rather than letting wrong values propagate silently until they cause a cryptic failure elsewhere. The decorator pattern is one line per function; the project-wide hook is one line per package; the performance overhead is O(1) regardless of data size.

The real-life config loader example shows the most practical use case: enforcing structure at the boundary where external data (JSON files, API responses, user input) enters your typed Python code. Extend that example by adding more TypedDict levels, swapping in BeartypeConf(violation_type=UserWarning) during a gradual migration, or combining it with beartype_this_package() to cover your entire application in one import. The official documentation at beartype.readthedocs.io covers advanced topics including custom validators, PEP 593 Annotated metadata, and the full BeartypeConf reference.


Further Reading: For more details, see the Python random module documentation.

Frequently Asked Questions

What is a Markov chain in simple terms?

A Markov chain is a mathematical model where the next state depends only on the current state, not on the sequence of events that preceded it. In text generation, this means the next word is predicted based only on the current word or phrase.

How does a Markov chain text generator work in Python?

A Python Markov chain text generator builds a dictionary of word transitions from training text. Each word maps to a list of words that follow it. The generator then randomly selects next words based on these observed probabilities to create new text.

What are the limitations of Markov chain text generation?

Markov chains produce text that can be grammatically inconsistent over long passages because they only consider local context (the previous few words). They lack understanding of meaning, coherence, and long-range dependencies that modern language models handle better.

Can I use Markov chains for purposes other than text generation?

Yes. Markov chains are used in weather prediction, stock market modeling, DNA sequence analysis, game AI, PageRank algorithms, and many simulation scenarios. Any system where transitions between states follow probabilistic rules can be modeled with Markov chains.

How do I improve the quality of Markov chain generated text?

Increase the chain order (use pairs or triples of words instead of single words as keys), use larger and higher-quality training data, add post-processing to fix grammar, and filter out nonsensical outputs. Higher-order chains produce more coherent text but require more training data.

Related Articles

- How To Generate Random Numbers in Python

- How To Create Vector Embeddings with Python

- How To Read and Write JSON Files
